Note: 2025 ETHTaipei

Author: York

ETHTaipei is a two-day blockchain event held on April 1st and 2nd, and it stands as one of Taiwan’s premier annual Ethereum gatherings. This article is a condensed summary of the key takeaways from my participation in ETHTaipei. Although limited time and space made it impossible to cover all the exciting content, I’ve done my best to organize and present the highlights of what I saw and learned. I hope this summary will be helpful to others and offer some insights to those who couldn’t attend in person.

This article will cover several key topics:

Community & Ecosystem Development: Exploring the future direction of the Ethereum community, including the Foundation’s new vision and funding models for public goods.

Privacy & Zero-Knowledge Proofs (ZK): Sharing the latest advancements in ZK technology and its practical applications.

DeFi & Economic Models: Analyzing the economic incentives and innovative models within the DeFi ecosystem, including MEV handling mechanisms and real-world profit opportunities.

Core Technologies & Protocol Upgrades: Introducing recent major upgrades to the Ethereum protocol and related developments.

Blockchain Security: Discussing key blockchain security topics, including smart contract vulnerability analysis and formal verification tools.

ETHTaipei is a major gathering for blockchain enthusiasts. In addition to attending talks, many participants take the opportunity to connect, share updates, and promote the technologies they’re building. What made this year especially exciting was that ETHGlobal came to Taiwan for the first time, co-hosting a large-scale blockchain hackathon with ETHTaipei. This collaboration brought even more energy and richness to the event.

The following sections summarize talks related to specific topics. Not all sessions are covered — not because they weren’t valuable, but simply due to time constraints that made it impossible to attend every talk and activity. If you happened to attend other sessions, feel free to share your takeaways in the comments!

There were also many excellent workshops this year that I, unfortunately, couldn’t attend. One example is the session on how ZKEmail works, which I’m still very curious about.

The sessions related to these topics are as follows:

Community & Ecosystem Development

Vitalik Keynote

Public Goods + Ethereum Future

Privacy & Zero-Knowledge Proofs (ZK)

ZK Verifiers Exposed: Lessons from Real Bugs and Fixes

May the Proof Be With You: Fighting Sybils with Self Protocol

DeFi & Economic Models

MEV Tax in Action: Internalizing MEV in UniswapX

Boring Things in DeFi that Actually Make Money

Why have we given up on algorithmic stablecoins?

Core Technologies & Protocol Upgrades

EIP-7702 on Ethereum and Rollups

Why ERC-4337 Isn’t That Simple in Layer 2

EIP-7784: GETCONTRACT opcode

Validation Centric Design: agile and safe development for devs, an interchangeable AA wallet for users

Blockchain Security

Exploring AI’s Role in Smart Contract Security

Empowering Everyone: Taking Specialization Out Of Formal Methods

The main content begins below:

1. Community & Ecosystem Development

1.1. Vitalik Keynote

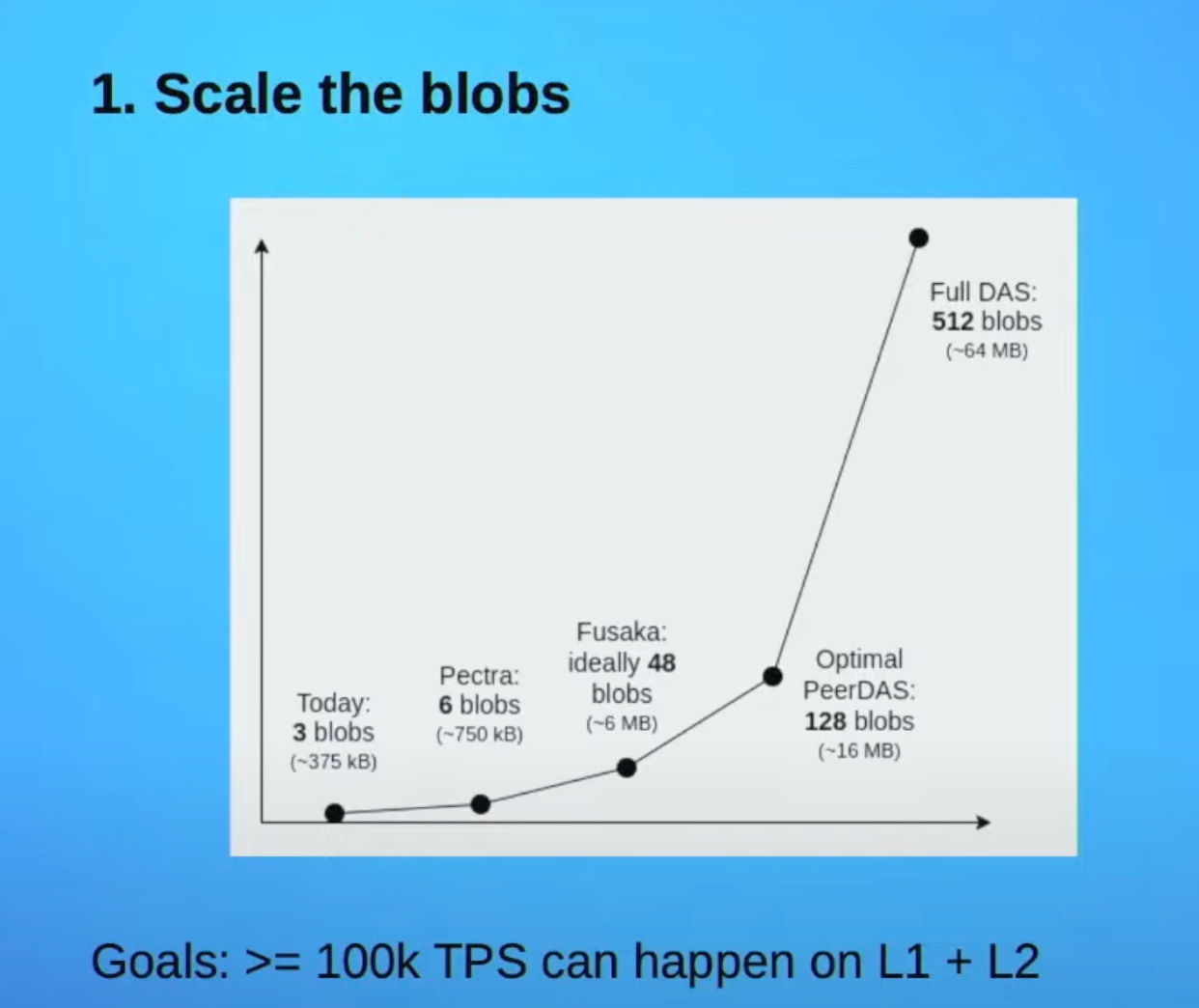

In his talk, Vitalik outlined Ethereum’s roadmap as it evolves from an experimental technology into a global settlement layer, emphasizing that Layer 2 is the key to future scalability. He reviewed the evolution from Plasma to Rollups and explained how EIP-4844 introduces blob data to significantly reduce costs and increase TPS.

Vitalik also stressed the importance of avoiding centralization risks in Layer 2 security. He introduced a new architecture that combines zero-knowledge (ZK) proofs with trusted execution environments (TEE), enabling withdrawals to be completed within an hour. Looking ahead, he envisions Layer 2 achieving sub-12 second finality and supporting native cross-chain asset transfers. This talk provided a clear blueprint for Ethereum’s scaling journey and called on developers to help build a decentralized future together.

Layer 2 protocols have grown from early-stage experiments into critical infrastructure, expanding Ethereum’s transaction capacity by approximately 17 times and significantly reducing transaction fees. However, two key challenges remain: Data Availability and Heterogeneity. The current blob space is sufficient for present demands, but as more applications emerge, it may become a bottleneck. Additionally, the lack of standardization across different L2 protocols leads to composability issues and inconsistent user experiences, highlighting the need for better coordination and standardization.

In the future, Ethereum may introduce the Data Availability Sampling (DAS) mechanism to address the blob space limitations. Currently, every Ethereum node is required to store the full content of all blobs. Under DAS, data would be sharded, meaning each node only needs to store a small portion of the total blob data. This would significantly enhance Ethereum’s TPS.

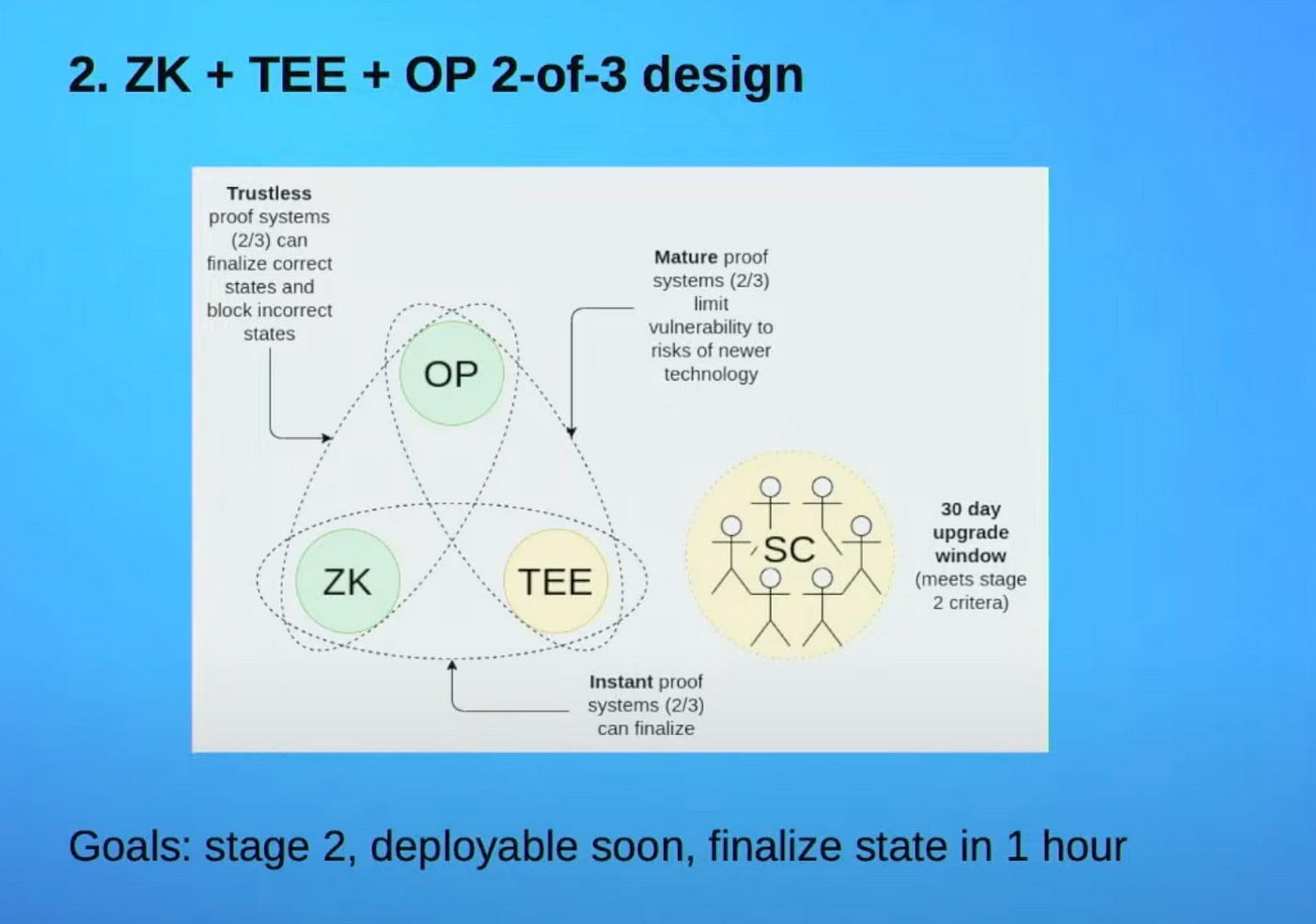

Vitalik proposed a design that combines three different proof systems to enable fast withdrawals on Layer 2. This design follows a “two-out-of-three” model, incorporating Optimistic, ZK, and Trusted Execution Environments (TEEs).

In typical scenarios, when a Layer 2 block is submitted, it can immediately be accompanied by both a ZK proof and a TEE proof. Since two proof systems are available, the block can achieve instant finality. The Optimistic Rollup acts as a third line of defense. If there’s a disagreement between ZK and TEE, the Optimistic system serves as the final arbiter.

The goal of this architecture is to enable final confirmation within one hour. While ZK proofs provide real-time verification, Optimistic proofs require a 7-day challenge window, and over-reliance on TEEs could lead to centralization risks. By combining all three, this design allows their strengths to complement each other.
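The two-out-of-three rule can be illustrated with a minimal sketch. The function name `settle` and the use of plain strings as state roots are assumptions made for illustration; the talk did not present code.

```python
from collections import Counter
from typing import Optional


def settle(zk_root: Optional[str],
           tee_root: Optional[str],
           optimistic_root: Optional[str]) -> Optional[str]:
    """Return the state root backed by at least two proof systems, else None.

    A value of None means that proof system has not reported yet, for
    example because the optimistic challenge window is still open.
    """
    votes = Counter(r for r in (zk_root, tee_root, optimistic_root) if r is not None)
    for root, count in votes.most_common():
        if count >= 2:
            return root
    return None


# Fast path: ZK and TEE agree, so there is no need to wait for the optimistic result.
fast = settle("0xabc", "0xabc", None)
# Dispute: ZK and TEE disagree, so the optimistic result breaks the tie.
disputed = settle("0xabc", "0xdef", "0xdef")
```

With only two conflicting answers and no optimistic result yet, `settle` returns `None`, which corresponds to waiting for the challenge window.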


1.2. Public Goods + Ethereum Future

In the opening keynote of Day 2, Vitalik delved into the central role of open source and public goods within the Ethereum ecosystem. He showcased a wide range of critical open-source projects that support Ethereum — created by Foundation members, companies, non-profit organizations, and independent volunteers. He emphasized that without open source, Ethereum would not be able to build trust, and it would be far more difficult to foster collaboration and innovation.

Vitalik described open-source software, documentation, educational resources, and research as forms of digital public goods. He called on the community to think about how to create a self-sustaining value flywheel, where the success of open-source projects fuels the next generation of builders, rather than relying on a single centralized organization with limited resources.

He also explained the definitions of public goods and open source, noting their conceptual similarities with some subtle differences. Public goods, in economic terms, refer to resources or projects that benefit a broad population and cannot effectively exclude non-paying users. Open source, on the other hand, is a licensing model that legally permits anyone to freely use, reproduce, modify, and redistribute the software. In short, open source is a method of implementation, while public goods represent a value-driven goal.

Vitalik also noted that open source is not a magic solution — if the underlying design is flawed, open-sourcing it won’t necessarily lead to positive outcomes. He referenced his own article, “Making Ethereum Alignment Legible”, to emphasize that “Ethereum Alignment” should involve higher-level standards — specifically, whether a project truly brings positive impact to users and the world at large.

There are four key elements for achieving meaningful alignment:

Open source: Core infrastructure should adhere to free software and open-source definitions.

Open standards: Follow or co-develop standards like ERC-20 and ERC-1271 to promote interoperability.

Decentralization and security: Avoid centralized trust by evaluating system resilience through the “walkaway test” and “insider attack test”.

Positive-sum: For Ethereum: Use ETH as the native token, contribute to open-source technologies, and commit a portion of revenue to support ecosystem public goods. For the broader society: Promote financial inclusivity, support non-Ethereum public goods, and develop technologies with broad applicability.


2. Privacy & Zero-Knowledge Proofs (ZK)

2.1. ZK Verifiers Exposed: Lessons from Real Bugs and Fixes

This session was presented by Tejaswa Rastogi from ZKsync, focusing on security issues in zero-knowledge proof (ZK) verifiers. He shared several real-world vulnerabilities he has encountered, which can be broadly categorized into the following common issues:

Trusted Setup Risks: If the Tau from the trusted setup is not properly destroyed, it could be exploited by attackers. Using multi-party computation (MPC) is recommended to avoid single points of failure.

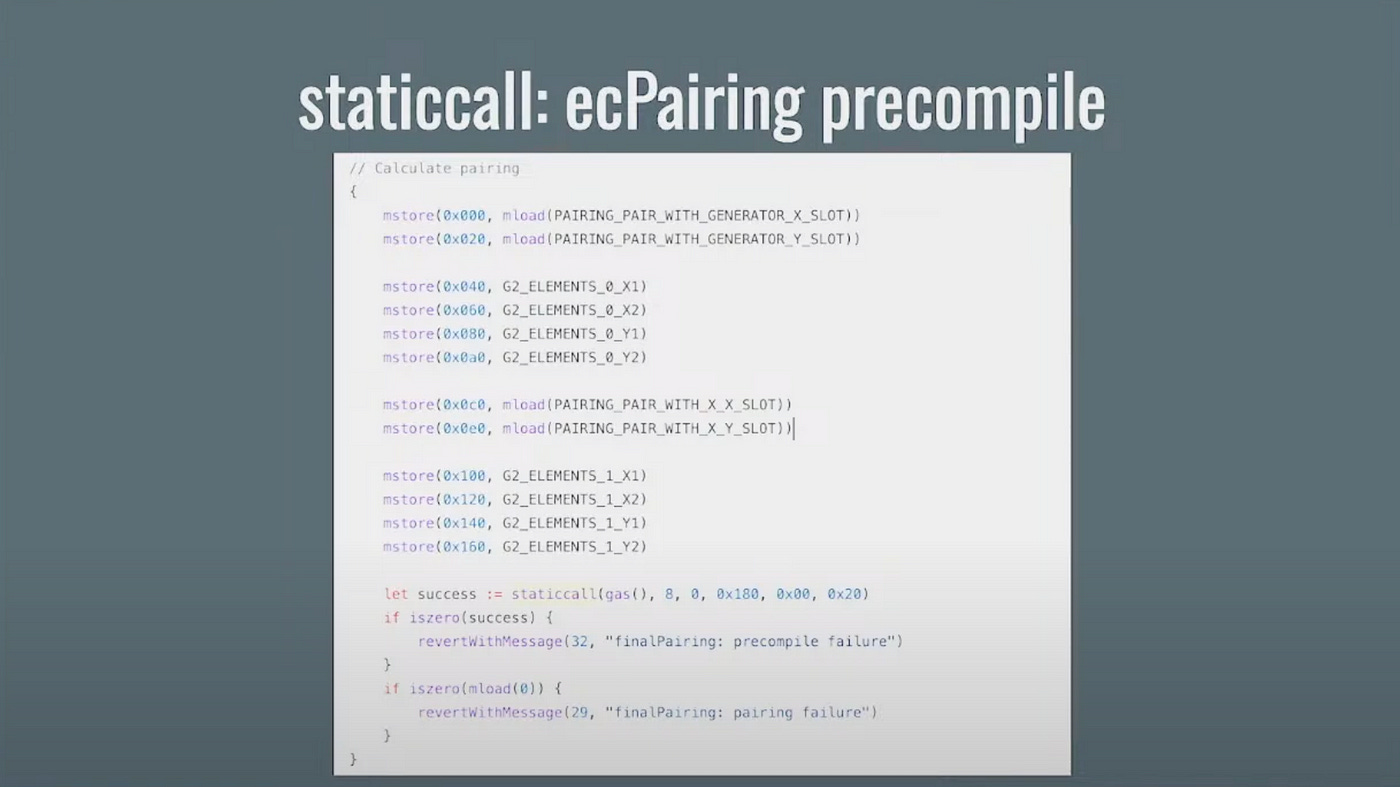

Insufficient staticcall Checks: Verifiers are often written in Yul, and if the success status or return values of staticcall (such as results from EC pairing checks) are not properly validated, it may result in incorrect proofs being accepted.

Missing Range Checks: If proof elements and public inputs are not constrained within the field, they may be exploited for overflows or forgery. Strict range checks must be enforced. A ZK proof typically contains many components, such as evaluations at a specific point (Zeta), like proof_L(zeta) and proof_R(zeta). If elements are not restricted to the field, an attacker could exploit this to perform overflow operations, pushing values outside the valid domain. Due to the nature of modular arithmetic, these out-of-bound values may still appear valid, potentially causing the verifier to misinterpret data or enabling the attacker to forge proofs by generating equivalent-looking results through alternative means.
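To make the aliasing problem concrete, here is a small sketch. It assumes the BN254 scalar field, which is commonly used by Ethereum verifiers; the two helper functions are hypothetical and only model the comparison step, not a full verifier.

```python
# BN254 scalar field modulus (assumed here; the talk did not name a curve).
R = 21888242871839275222246405745257275088548364400416034343698204186575808495617


def accepts_without_range_check(public_input: int, expected: int) -> bool:
    # All arithmetic is modular, so x and x + R are indistinguishable here.
    return public_input % R == expected % R


def accepts_with_range_check(public_input: int, expected: int) -> bool:
    # Reject any non-canonical encoding before doing field arithmetic.
    if not 0 <= public_input < R:
        return False
    return public_input == expected


honest = 5
alias = honest + R  # still fits in a 256-bit word, but lies outside the field
```

Because `alias` fits in a 256-bit word, a verifier that skips the range check treats it as the same input as `honest`, while the strict version rejects it.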

Lagrange Evaluation Vulnerability: If Zeta is a root of unity, formula (1) below breaks down. When ζ is an n-th root of unity, ζⁿ − 1 = 0, so the numerator is zero; when ζ also coincides with the root of unity that appears in the denominator, the denominator is zero as well. A verifier that resolves this 0/0 case with, for instance, a modular inverse that maps 0 to 0 ends up with Lj(ζ) = 0 even though the correct value at that point is 1, violating the properties of Lagrange polynomials. In such cases, the verifier should switch to an alternative Lagrange interpolation formula, such as formula (2), to ensure correctness.

Formulas:

$$L_j(\zeta) = \frac{\omega^j}{n} \cdot \frac{\zeta^n - 1}{\zeta - \omega^j} \qquad (1)\ \text{the less robust formula}$$

$$L_j(\zeta) = y_j \prod_{0 \le m \le k,\ m \ne j} \frac{\zeta - x_m}{x_j - x_m} \qquad (2)\ \text{the recommended formula}$$
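The difference between the two formulas can be checked in a toy field. The sketch below assumes the prime 17 with a domain of size 4 (4 is a primitive 4th root of unity mod 17) and a Fermat-style inverse that returns 0 for input 0; these choices are illustrative and not taken from any production verifier. The product form omits the y_j factor and evaluates only the basis polynomial.

```python
P = 17      # toy prime field
N = 4       # evaluation domain size
OMEGA = 4   # primitive 4th root of unity mod 17 (4**4 % 17 == 1)


def inv(x: int) -> int:
    # Fermat inversion; note that it silently returns 0 when x == 0.
    return pow(x, P - 2, P)


def lagrange_closed_form(j: int, zeta: int) -> int:
    # Formula (1): (omega^j / n) * (zeta^n - 1) / (zeta - omega^j)
    w_j = pow(OMEGA, j, P)
    numerator = w_j * (pow(zeta, N, P) - 1) % P
    denominator = N * (zeta - w_j) % P
    return numerator * inv(denominator) % P


def lagrange_product(j: int, zeta: int) -> int:
    # Formula (2) without y_j: product over m != j of (zeta - x_m) / (x_j - x_m)
    xs = [pow(OMEGA, m, P) for m in range(N)]
    result = 1
    for m, x_m in enumerate(xs):
        if m != j:
            result = result * ((zeta - x_m) % P) % P
            result = result * inv((xs[j] - x_m) % P) % P
    return result
```

For a ζ outside the domain both functions agree, but at ζ = ω^j the closed form evaluates to 0 while the product form returns the correct value 1.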

Fiat-Shamir Vulnerability (Frozen Heart): The core issue of this vulnerability lies in some PlonK implementations failing to include all public inputs in the hash computation during the Fiat-Shamir transformation. As a result, an attacker can craft malicious public inputs to forge a proof that the verifier will incorrectly accept. This type of attack has been confirmed in multiple PlonK implementations, including Dusk Network’s plonk, Iden3’s SnarkJS, and ConsenSys’ gnark. To prevent this, it is crucial that all relevant public inputs are included in the Fiat-Shamir hash computation, ensuring the proof cannot be tampered with or faked by an attacker.

Reference:

https://diligence.consensys.io/audits/2023/06/linea-plonk-verifier/

https://blog.trailofbits.com/2022/04/18/the-frozen-heart-vulnerability-in-plonk/

2.2. May the Proof Be With You: Fighting Sybils with Self Protocol

This session was presented by Marek Olszewski from Celo, focusing on ZK verification in the Self Protocol. I was particularly interested in this session because their team offered a significant number of prizes at ETHGlobal, and I wanted to see what they were truly capable of.

Celo was previously an Ethereum-compatible Layer 1, but just a week before ETHTaipei, it officially transitioned to a Layer 2, marking its return to the mainstream Ethereum ecosystem. However, there are still areas that need improvement. For instance, gas fees are still paid in CELO.

The Self Protocol is also a recently launched technology, which explains why the team is actively promoting and pushing it forward.

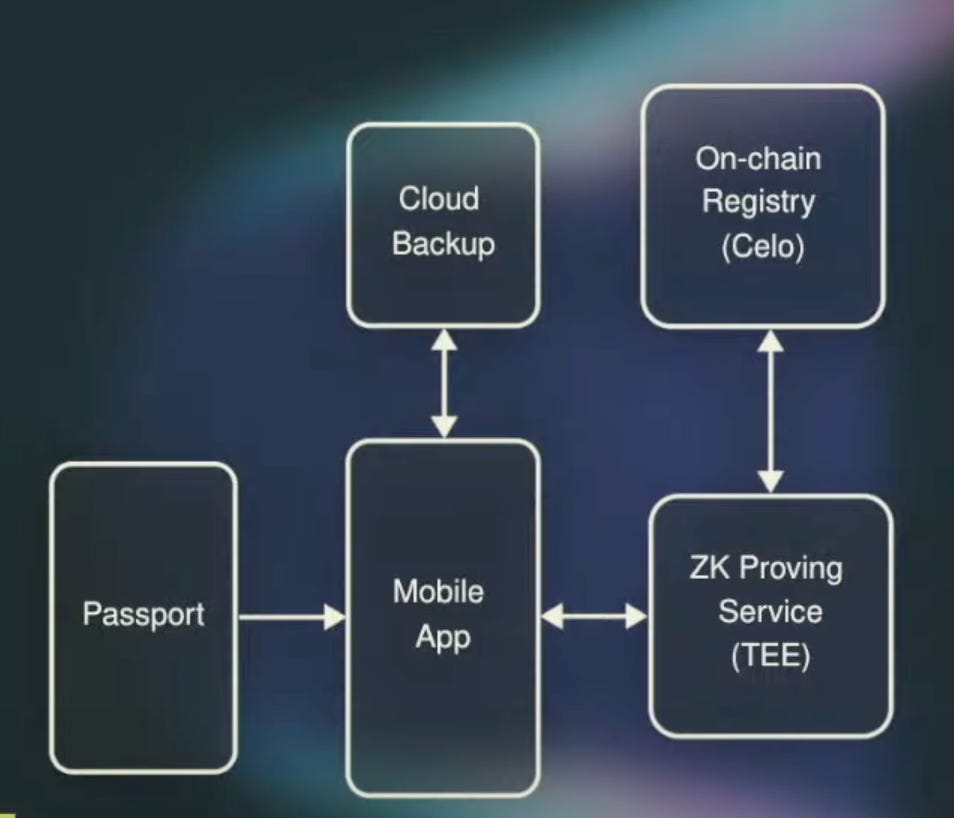

NFC Reading: The Self Protocol application can read data from the NFC chip embedded in biometric passports.

Secret Generation and Backup: To protect users from being tracked by the country that issued their passport, especially regarding their DApp usage, Self Protocol generates a unique secret for each passport and backs it up to iCloud or Google Drive.

Trusted Execution Environment (TEE): Since current proof systems are not efficient enough to run quickly on mobile devices, Self Protocol uses TEE to accelerate proof generation. TEE is a secure hardware environment that provides verifiable attestations of code execution. Only after verifying the security of the TEE does the application send the encrypted passport data to it for proof generation, after which the data is deleted.

Gas Fees on Celo Chain: For use cases that require writing proofs on-chain, Self Protocol utilizes its built-in Sybil resistance mechanism to cover gas fees on behalf of users, allowing them to interact without connecting a wallet.

Self Protocol is developed by Self Labs and the Open Passport team. It is built on ZK-SNARKs and biometric passports, using NFC to read passport chips. Through zero-knowledge proofs and selective disclosure, it verifies identity attributes, allowing users to prove they are unique, real individuals without revealing sensitive personal information.

Self Protocol has several key features:

Sybil Attack Resistance: By binding identities to real passports, it significantly increases the cost and difficulty of creating large numbers of fake identities.

Bad Actor Detection: The protocol can verify whether a user is on a sanctions list or other bad actor lists — without revealing the user’s actual identity.

Human vs. AI Differentiation: It helps determine whether the interacting party is a real human or an AI agent.

Self Protocol supports two types of proof verification:

1. Off-chain verification

2. On-chain verification, which is currently limited to the Celo blockchain, making its usage somewhat constrained for now.


3. DeFi & Economic Models

3.1. MEV Tax in Action: Internalizing MEV in UniswapX

This session was presented by Alan Wu from Uniswap Labs, where he shared how UniswapX is designed to return value from arbitrage bots back to users, aiming to improve the trading experience and enhance the fairness and efficiency of DeFi protocols.

UniswapX is an intent-based trading design aimed at simplifying the user experience, reducing transaction costs, and decoupling matching from execution. Unlike traditional Uniswap, UniswapX facilitates trades through intents: users simply sign a gas-free message indicating the token they want to trade and the minimum output they expect. Using Permit2, users authorize token transfers, and UniswapX then searches for the most favorable swap path. Once matched, the executor submits the transaction on-chain.

What is MEV?

Transactions on the blockchain are processed in block order, which often causes AMM pricing to lag behind real market conditions, creating opportunities for Maximal Extractable Value (MEV).

Block proposers and builders exploit transaction ordering to extract this value, while users are forced to pay priority fees to compete for favorable execution. As a result, value that should have gone back to users is instead lost.

What are MEV taxes?

MEV taxes are a protocol-level design intended to redirect a portion of the profits that would traditionally go to block proposers or searchers back to the transaction initiators or the protocol itself. Built on top of the already competitive priority fee market (i.e., the MEV searcher market), these mechanisms enforce a tax proportional to the priority fee paid by searchers. This allows the protocol to capture revenue from MEV competition. The higher the MEV, the fiercer the competition among searchers — and the greater the MEV tax that can be redistributed to users. Protocols can also implement additional compensation mechanisms based on the user’s priority fee, such as issuing extra tokens or offering slippage refunds, to offset the cost of higher fees.

How do MEV taxes work?

The prerequisite for implementing MEV taxes is that the chain must sort transactions by priority fees, as is the case with networks like Base and Unichain.

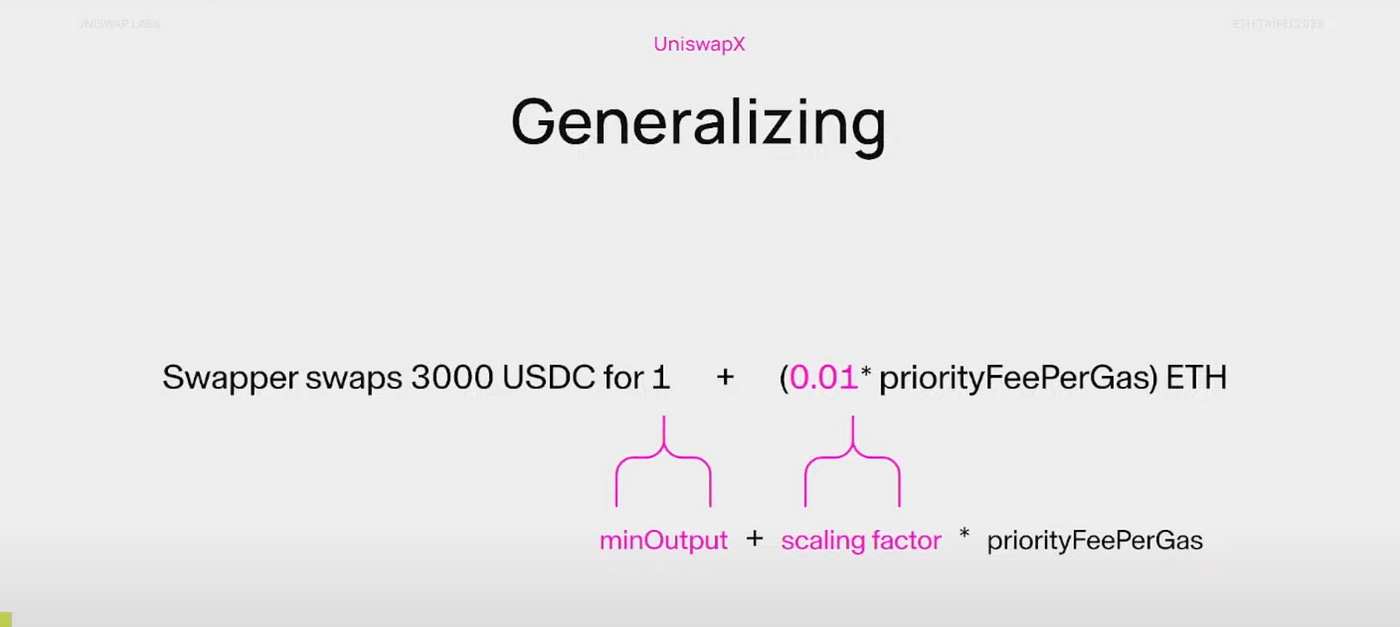

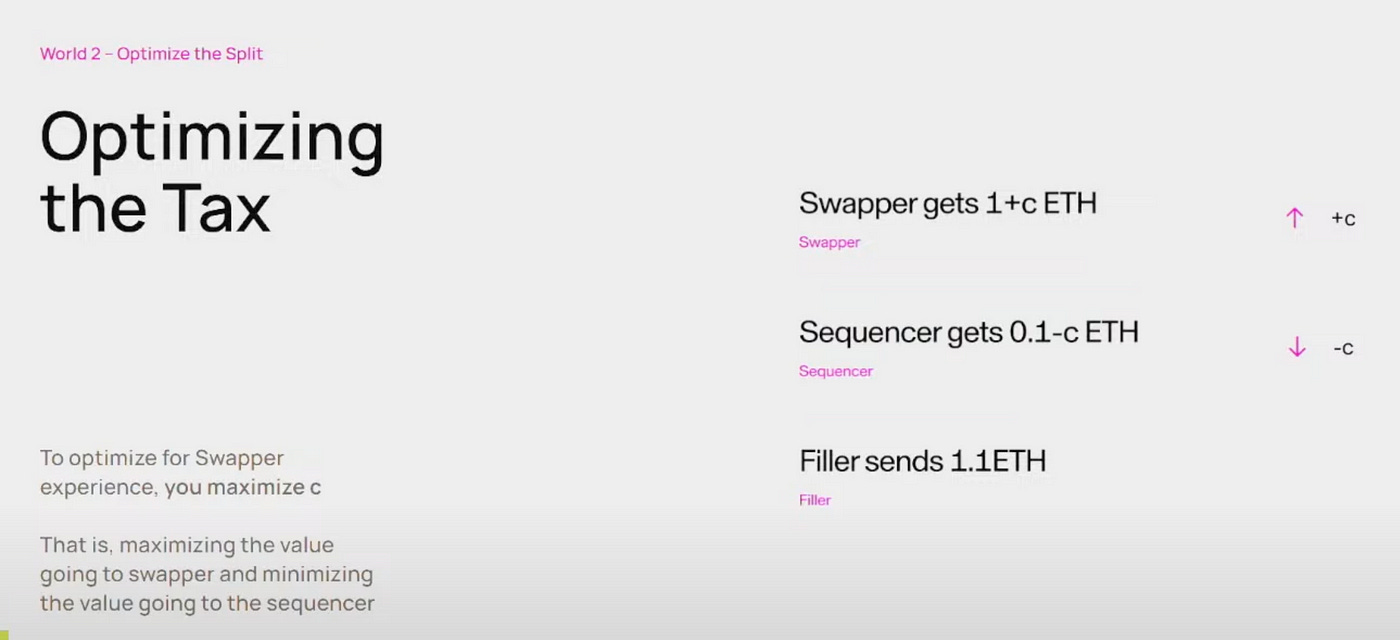

UniswapX introduces a formula, shown in the diagram, that incorporates priorityFeePerGas to reduce the profit available to the sequencer and increase the number of tokens the swapper actually receives. For example, suppose a user wants to swap 3,000 USDC for ETH, and sets a minimum expected output (minOutput) of 1 ETH. If a Filler is willing to pay a higher priorityFeePerGas, the user can receive additional ETH as output. This priorityFeePerGas is multiplied by a scaling factor (0.01) to return a portion of the profit to the swapper. This design incentivizes Fillers to bid higher in priority fees, while helping users recover value that would otherwise be entirely captured by MEV extractors.

To ensure that the swapper receives more tokens instead of letting the sequencer capture the price difference, the most intuitive approach is to make the scaling factor as high as possible. Originally, the swapper might receive only 1 ETH, but with a scaling factor added, they could receive 1 + c ETH. This lifts the effective swap rate above its original level (for example, from 90% to as much as 95%), significantly improving swap efficiency.

However, a larger scaling factor isn’t always better due to the issue of granularity. When the scaling factor is too large, Fillers’ bids fall into discrete rebate steps, making it impossible to respond to small price differences, which can lead to failed swaps.

To address this, UniswapX keeps the scaling factor very small, using the formula:

minOutput * (1 + priorityFee × mpsPerPriorityFeeWei / MPS),

where mps stands for milli-basis points (1 mps = 0.00001%) and MPS = 10^7 is the number of mps in 100%. This allows Fillers to compete even on very small price differences, ensuring that opportunities aren’t lost due to overly coarse bidding units.
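As a rough sketch of the scaling formula, the function below applies it with integer arithmetic. It assumes MPS = 10^7 (consistent with the 0.00001% unit mentioned above) and rounds the output up in the swapper’s favor; both the function name and the rounding direction are assumptions, not a copy of the UniswapX contracts.

```python
MPS = 10**7  # assumed denominator: 10^7 units of 0.00001% make up 100%


def scale_min_output(min_output: int,
                     priority_fee_wei: int,
                     mps_per_priority_fee_wei: int) -> int:
    """Compute minOutput * (1 + priorityFee * mpsPerPriorityFeeWei / MPS).

    All amounts are integers in the token's smallest unit. The result is
    rounded up so that rounding never reduces what the swapper receives.
    """
    numerator = min_output * (MPS + priority_fee_wei * mps_per_priority_fee_wei)
    return -(-numerator // MPS)  # ceiling division


one_eth = 10**18
# A Filler bidding a priority fee of 100 wei with 1 mps per wei of priority fee
# must deliver an extra 0.001% on top of the 1 ETH minimum.
required = scale_min_output(one_eth, priority_fee_wei=100, mps_per_priority_fee_wei=1)
```

Because each wei of priority fee only moves the output by one ten-millionth, Fillers can outbid one another in very fine increments.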


3.2. Boring Things in DeFi that Actually Make Money

This session was presented by Terence An from Hyperplex, where he shared how to make money effortlessly — proving once again that in web3, everyone seems to be a money-making expert, casually earning while doing nothing.

He emphasized that an ideal investment strategy should be “as boring as watching grass grow or paint dry.” The key lies in building an automated system, rather than chasing high-risk, high-reward opportunities.

Core belief: Long-term stability is better than short-term thrills.

Four key elements to make your strategy “more boring”:

Position Sizing: Use methods like the Kelly criterion to determine the proportion of each strategy in the investment portfolio.

Diversification: Spread exposure across different tokens, protocols, timeframes, and liquidity conditions.

Risk Hedging: Use long/short positions, stop-loss settings, and other tactics to reduce directional and single-asset risks.

Attention Management: Establish consistent monitoring and rebalancing mechanisms to avoid emotionally driven decisions.
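For readers unfamiliar with the Kelly criterion, here is a minimal sketch of position sizing. It assumes the simplified binary-bet form f* = p − (1 − p) / b, where p is the win probability and b is the ratio of average win to average loss; the talk did not specify a formula, and the half-Kelly multiplier is an illustrative assumption.

```python
def kelly_fraction(win_probability: float, win_loss_ratio: float) -> float:
    """Fraction of capital suggested by the binary-bet Kelly formula.

    Returns 0.0 when the edge is negative, i.e. the strategy should be skipped.
    """
    if not 0.0 <= win_probability <= 1.0:
        raise ValueError("win_probability must be between 0 and 1")
    if win_loss_ratio <= 0:
        raise ValueError("win_loss_ratio must be positive")
    fraction = win_probability - (1.0 - win_probability) / win_loss_ratio
    return max(0.0, fraction)


def position_size(capital: float,
                  win_probability: float,
                  win_loss_ratio: float,
                  kelly_multiplier: float = 0.5) -> float:
    # Scaling the Kelly fraction down (here to half) trades growth for lower variance.
    return capital * kelly_multiplier * kelly_fraction(win_probability, win_loss_ratio)
```

For example, a strategy that wins 60% of the time with equal-sized wins and losses gets a full-Kelly fraction of about 0.2, so half-Kelly on 10,000 USDC allocates roughly 1,000 USDC.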

Bera / ETH Arbitrage Strategy

Long high-yield tokens (Bera), short highly correlated ETH

Hold USDC at the same time to reduce directional risk

Key emphasis: position sizing, risk measurement, and rebalancing rules — all are essential

Since I’m not an investment expert, I’ve just listed a few key points. If you’re interested, feel free to check out his full talk. Whether or not it’s useful is up to you.

3.3. Why have we given up on algorithmic stablecoins?

This session was presented by Drew from Pinto. The talk wasn’t about encouraging people to abandon algorithmic stablecoins, but rather to approach them with an open mind. Nowadays, the term “algorithmic stablecoin” often triggers strong aversion, and understandably so given the experiences with Ponzi-like schemes such as Terra/Luna. It’s hard to regain trust in this concept. Interestingly, before 2021, algorithmic stablecoins were actually a highly praised idea in the space.

I did a bit of research on the company — Pinto is a project that sits somewhere between an algorithmic stablecoin and a collateral-backed stablecoin. I also noticed they have a bug bounty listed on Immunefi, which is worth checking out.

I feel this can be explained from several perspectives:

The Importance of Stablecoins

Stablecoins remain essential on-chain as they serve as the core foundation of DeFi and everyday transactions. The total circulating market cap has reached $220 billion, nearly equivalent to Ethereum’s market cap, highlighting their crucial role in asset pricing, liquidity, payments, and risk hedging.

Types of Stablecoins

Centralized Stablecoins: Examples include USDT and USDC. While large in scale, they face centralization issues such as censorship, trust assumptions, and government oversight.

Decentralized Stablecoins: These are mostly overcollateralized, like DAI. While decentralized, they suffer from inefficiencies and limited scalability.

Types of Algorithmic Stablecoins

Reserves-Based: The protocol holds reserve assets (e.g., its own token, BTC, ETH). When the stablecoin’s price drops below its peg, it uses buyback mechanisms to support the price.

Credit-Based: Users “borrow” assets from the protocol, and price stability is maintained based on trust in the system’s future repayment ability — similar to modern banking systems. Credit-based models do not rely on reserve assets, offering more flexibility and scalability. Pinto falls into this category.


4. Core Technologies & Protocol Upgrades

4.1. EIP-7702 on Ethereum and Rollups

This session was presented by Martin Derka from Zircuit, where he introduced the design principles, implementation, use cases, and security risks of EIP-7702.

EIP-7702 is a proposal that will be included in Ethereum’s upcoming Pectra upgrade. It allows an EOA (Externally Owned Account) to delegate its code to a smart contract, essentially adding a contract interface to your address, while still retaining full control via the original private key. The delegation stays in place until the account owner replaces or clears it with a new authorization.

Meaning of Set Code

Set Code refers to the new transaction type introduced by EIP-7702 (type 0x04), which allows an EOA (Externally Owned Account) to delegate its execution logic to a smart contract.

Once a contract code is set on the account, it exposes the interface of that smart contract, allowing anyone to interact with it as if it were a regular contract.

When executed, the logic runs using the EOA’s own storage as context, meaning all state changes occur on the EOA itself, not on the delegated contract.

Use Cases

Authorization Mechanism: Implements an authorization model similar to ERC-20 approve, allowing accounts to delegate permission for specific logic execution.

Universal Executor: Enables users to delegate their EOA to a contract that can centrally handle multiple DeFi invocation logics.

Relayer: Allows users to have their transactions submitted by a third party, hiding transaction details such as gas and nonce. Users only need to provide a signature, and the relayer can send the transaction on their behalf.

Security?

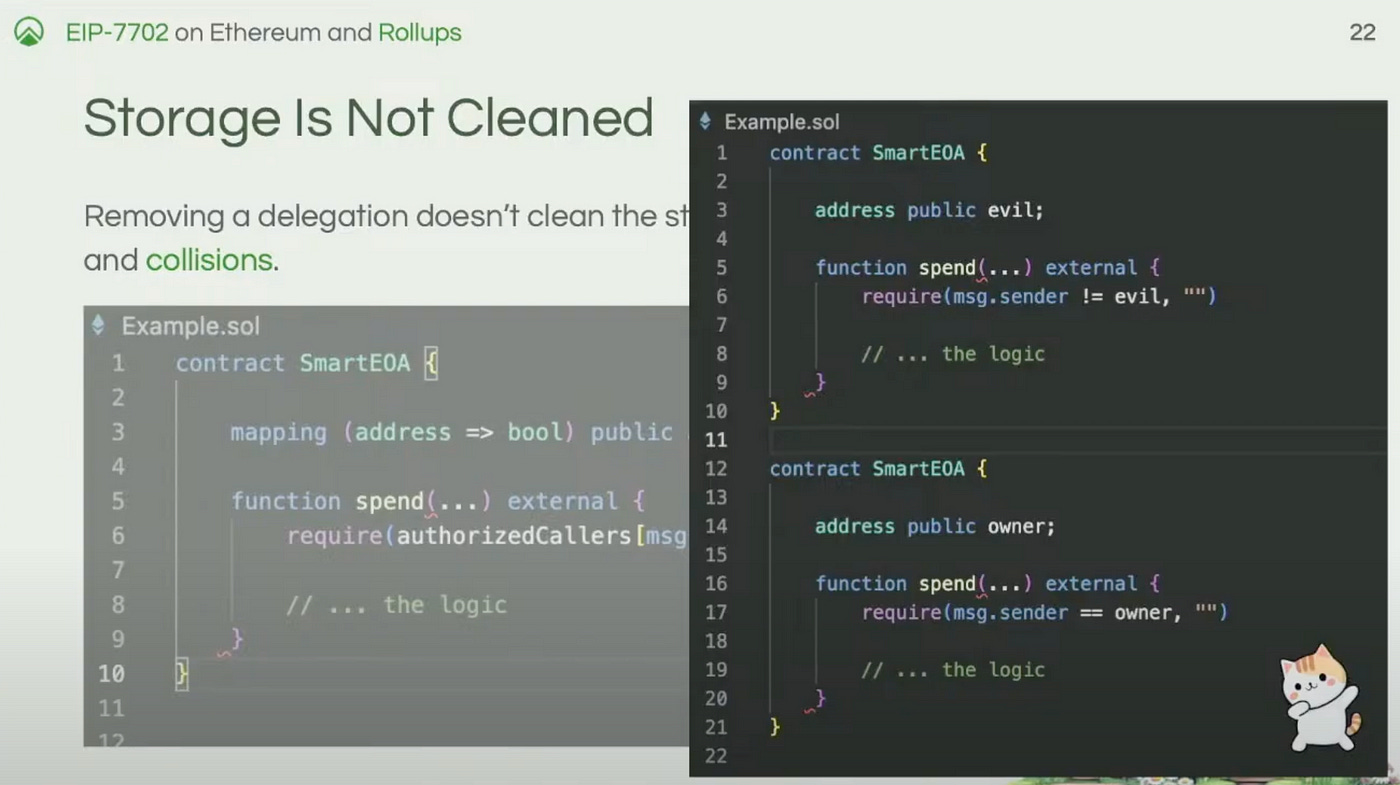

Storage Management: When delegation is removed, the EOA’s storage is not cleared, which could lead to issues if delegation is later re-applied. One solution is to separate storage by using a key-value structure, and reset the corresponding storage slot during initialization.

Risks from Malicious Contracts: Users may be tricked into delegating their account to a malicious contract. Similar to how Permit2 improves convenience while increasing phishing risks, this mechanism could also expose users to more attacks.

Impact on Existing Contracts: The introduction of EIP-7702 may affect existing contracts that rely on the condition msg.sender == tx.origin. When an EOA delegates its functionality to a contract, msg.sender can still be the EOA address, and tx.origin remains the original EOA, even though that address now executes contract code. As a result, contracts that use this condition to exclude contract calls may fail, because msg.sender == tx.origin no longer guarantees that the caller is not a contract.

Security Design Recommendations:

1. Include call batch data in calldata, sign it, and verify the signature.

2. Add anti-replay mechanisms, such as incorporating the chain ID.

3. Use a nonce to prevent the same command from being executed multiple times.

4. Implement a global invalidation nonce to revoke previously signed transactions.
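The four recommendations can be sketched together in a small model. This is not contract code: HMAC with a shared key stands in for the EOA’s ECDSA signature, and the names `sign_batch`, `BatchExecutor`, and `invalidate_below` are hypothetical. A real delegate contract would instead recover the signer from the signature on-chain.

```python
import hashlib
import hmac
import json


def sign_batch(key: bytes, chain_id: int, nonce: int, calls: list) -> bytes:
    # The signed payload covers the call batch, the chain ID, and the nonce.
    payload = json.dumps({"chainId": chain_id, "nonce": nonce, "calls": calls},
                         sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).digest()  # stand-in for ECDSA


class BatchExecutor:
    def __init__(self, key: bytes, chain_id: int):
        self.key = key
        self.chain_id = chain_id
        self.used_nonces = set()
        self.min_valid_nonce = 0  # global invalidation nonce

    def invalidate_below(self, nonce: int) -> None:
        # Revokes every signed batch whose nonce is lower than `nonce`.
        self.min_valid_nonce = max(self.min_valid_nonce, nonce)

    def execute(self, nonce: int, calls: list, signature: bytes) -> int:
        if nonce < self.min_valid_nonce:
            raise ValueError("batch was revoked")
        if nonce in self.used_nonces:
            raise ValueError("nonce already used")
        # Recomputing with this chain's ID rejects signatures made for other chains.
        expected = sign_batch(self.key, self.chain_id, nonce, calls)
        if not hmac.compare_digest(expected, signature):
            raise ValueError("bad signature")
        self.used_nonces.add(nonce)
        return len(calls)  # number of calls that would be executed
```

A batch signed for one chain fails verification on another, a replayed nonce is rejected, and raising the invalidation nonce cancels any outstanding signatures below it.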


4.2. Why ERC-4337 Isn’t That Simple in Layer 2

This session was presented by Alfred Lu from imToken Labs, focusing on the challenges of implementing ERC-4337 on Layer 2. The application of ERC-4337 on Layer 2 faces multiple challenges, including protocol differences, authorization and synchronization issues, address consistency, complexity of PVG (PreVerificationGas) calculation, and support from infrastructure and tools.

Protocol Differences & Asynchronicity

Different Layer 2s, such as Arbitrum, Optimism, and zkSync, vary in their EIP/RIP support, hard fork schedules, opcode implementations, gas structures, and fee mechanisms. These differences lead to inconsistent implementations of ERC-4337 across chains. Additionally, a single transaction cannot simultaneously update account states across multiple chains, making cross-chain consistency difficult.

Authorization & Synchronization in Multi-Chain Accounts

Traditional EOAs share the same address and private key across different chains, but Account Abstraction (AA) accounts may have different addresses and authorization methods on different chains. For example, a successful transferOwnership() on one chain and a failure on another can cause unsynchronized account states, increasing management complexity.

Address Consistency Issues

Users generally expect to have the same address across different chains, but variations in contract deployment environments can lead to address mismatches, impacting both user experience and security. For instance, differences in opcode support (e.g., PUSH0) can cause contract addresses to diverge, even if the source code is identical.

PVG (PreVerificationGas) Calculation Complexity

On Layer 2, transaction costs must account for both the security costs of Layer 1 and the processing costs of Layer 2. A standard on-chain transaction handles the full lifecycle of a UserOperation, including both the verification and execution phases. However, not all gas costs are covered by VerificationGasLimit and CallGasLimit.

The additional overhead that cannot be accurately measured on-chain using gasleft() is captured by preVerificationGas. Since the total cost of a transaction includes not only Layer 2 gas usage but also the calldata cost of submitting data to Layer 1, the overall fee calculation becomes significantly more complex.
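One way to picture this is to convert the Layer 1 fee into an equivalent amount of Layer 2 gas and fold it into preVerificationGas. The sketch below assumes that conversion is a simple division by the L2 gas price, rounded up, and that the L1 calldata size is expressed in gas units; the function names and example numbers are hypothetical, and real bundlers use chain-specific fee oracles.

```python
def pvg_for_l1(l1_gas_price: int, l1_calldata_size: int, l2_gas_price: int) -> int:
    """Express the L1 data cost (in wei) as an equivalent amount of L2 gas."""
    l1_cost_wei = l1_gas_price * l1_calldata_size
    return -(-l1_cost_wei // l2_gas_price)  # ceiling division


def total_gas_used(verification_gas_limit: int,
                   call_gas_limit: int,
                   pvg_l2: int,
                   pvg_l1: int) -> int:
    # Measurable phases plus the two preVerificationGas components.
    return verification_gas_limit + call_gas_limit + pvg_l2 + pvg_l1


# Hypothetical numbers: 20 gwei L1 gas price, 1,600 gas of calldata,
# 0.1 gwei L2 gas price.
l1_part = pvg_for_l1(20 * 10**9, 1_600, 10**8)
total = total_gas_used(100_000, 50_000, 21_000, l1_part)
```

In this example the L1 component dominates the total, which is why a small change in the L1 gas price can shift the required preVerificationGas noticeably.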

The total cost for a Bundler to process a transaction includes both the Layer 1 security cost and the Layer 2 execution cost.

TotalCostForBundleTx = L1Cost + L2Cost

= L1GasPrice * L1CalldataSize + L2GasPrice * L2GasUsed

PVG (PreVerificationGas) refers to the gas cost that covers parts which cannot be dynamically measured during the verification or execution phases.

PVG = PVGForL2 + PVGForL1

The final complete gas formula is as follows:

TotalGasUsed = (VerificationGasLimit + CallGasLimit) + PVG

= (VerificationGasLimit + CallGasLimit + PVGForL2) + PVGForL1

= L2GasUsed + L1SecurityFee

Challenges in Infrastructure and Tooling Support

Some Layer 2s (such as Scroll) may not fully support Ethereum’s precompiled contracts, which can affect the implementation of certain contract logic. Development tools like Foundry and Slither also require time to update after hard forks, which means that deploying contracts or upgrading products during this period may carry risks.


4.3. EIP-7784: GETCONTRACT opcode

This session was presented by Tim Pechersky from Peeramid Labs, introducing EIP-7784. EIP-7784 enables bytecode hash–based contract indexing, establishing a trustworthy and immutable standard for referencing contracts on Ethereum. It aims to improve development efficiency, reduce deployment costs, and enhance security and interoperability.

What is GETCONTRACT opcode?

GETCONTRACT is a new EVM opcode proposed in EIP-7784. Its purpose is to provide a standardized, verifiable, and decentralized way to look up the address of a deployed contract based on its bytecode hash. You can think of GETCONTRACT as a DNS lookup system for smart contracts.

Ethereum Distribution System

The Ethereum Distribution System (EDS) is an open-source tool developed by Peeramid Labs to create a decentralized, verifiable, and modular contract management system. Developers can register and publish contracts on-chain, allowing others to reuse these verified contract modules directly — without having to compile them with other imported contracts. Through a combination of CodeIndex (ERC-7744) and the GETCONTRACT opcode (EIP-7784), it enables indexing and reuse of contract modules, which sounds quite convenient.
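The idea of looking up a contract by its bytecode hash can be modeled with a small in-memory index. This is only an illustration: the class and method names are hypothetical, and `hashlib.sha3_256` is used as a stand-in because the EVM’s Keccak-256 is not in the Python standard library (the two produce different digests).

```python
import hashlib
from typing import Dict, Optional


class CodeIndex:
    """Toy registry that maps a bytecode hash to the first address holding that code."""

    def __init__(self) -> None:
        self._address_by_hash: Dict[str, str] = {}

    def register(self, address: str, bytecode: bytes) -> str:
        # Stand-in hash; on-chain this would be the Keccak-256 code hash.
        code_hash = hashlib.sha3_256(bytecode).hexdigest()
        if code_hash in self._address_by_hash:
            raise ValueError("bytecode already indexed")
        self._address_by_hash[code_hash] = address
        return code_hash

    def get(self, code_hash: str) -> Optional[str]:
        # Analogous to resolving a name: hash in, contract address out.
        return self._address_by_hash.get(code_hash)


index = CodeIndex()
module_hash = index.register("0x1111", b"\x60\x00\x60\x00")
```

Because the key is derived from the bytecode itself, an entry cannot later be pointed at different code, which is what makes such a reference immutable.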

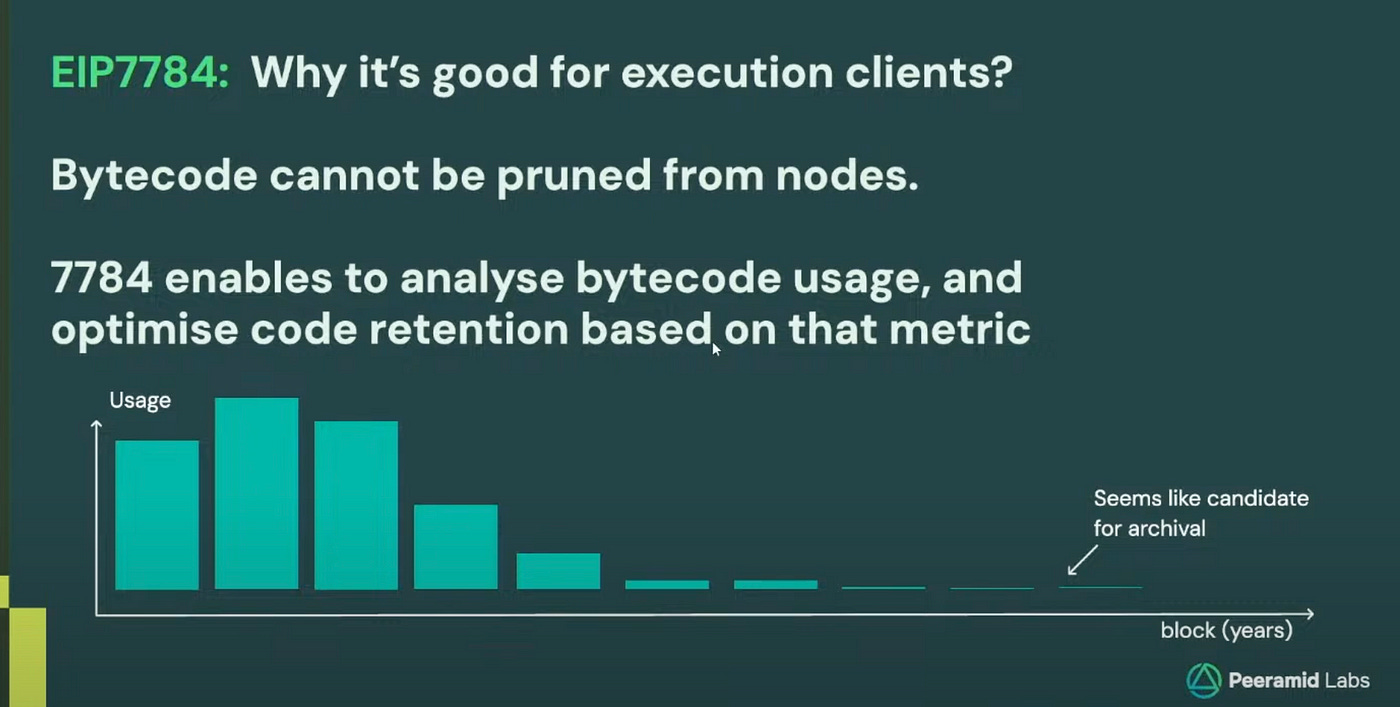

Advantage

Currently, bytecode cannot be pruned from nodes. Even smart contracts that haven’t been used in years still have their bytecode stored on-chain, consuming storage resources. EIP-7784 establishes a standardized reference mechanism based on bytecode hashes, which enables tracking of how frequently each bytecode is actually used. Based on usage metrics, it can help determine which bytecode can be compressed or archived.


4.4. Validation Centric Design: agile and safe development for devs, an interchangeable AA wallet for users

This session was presented by Nic from imToken Labs, sharing best practices for account abstraction (AA) wallets. The Validation-Centric Design approach simplifies contract structure by decoupling complex functionality from the account contract, reducing maintenance costs and improving user experience. Although challenges remain, such as gas fee payments and transaction visibility, this design offers greater flexibility for developers and delivers a more secure and convenient wallet experience for users.

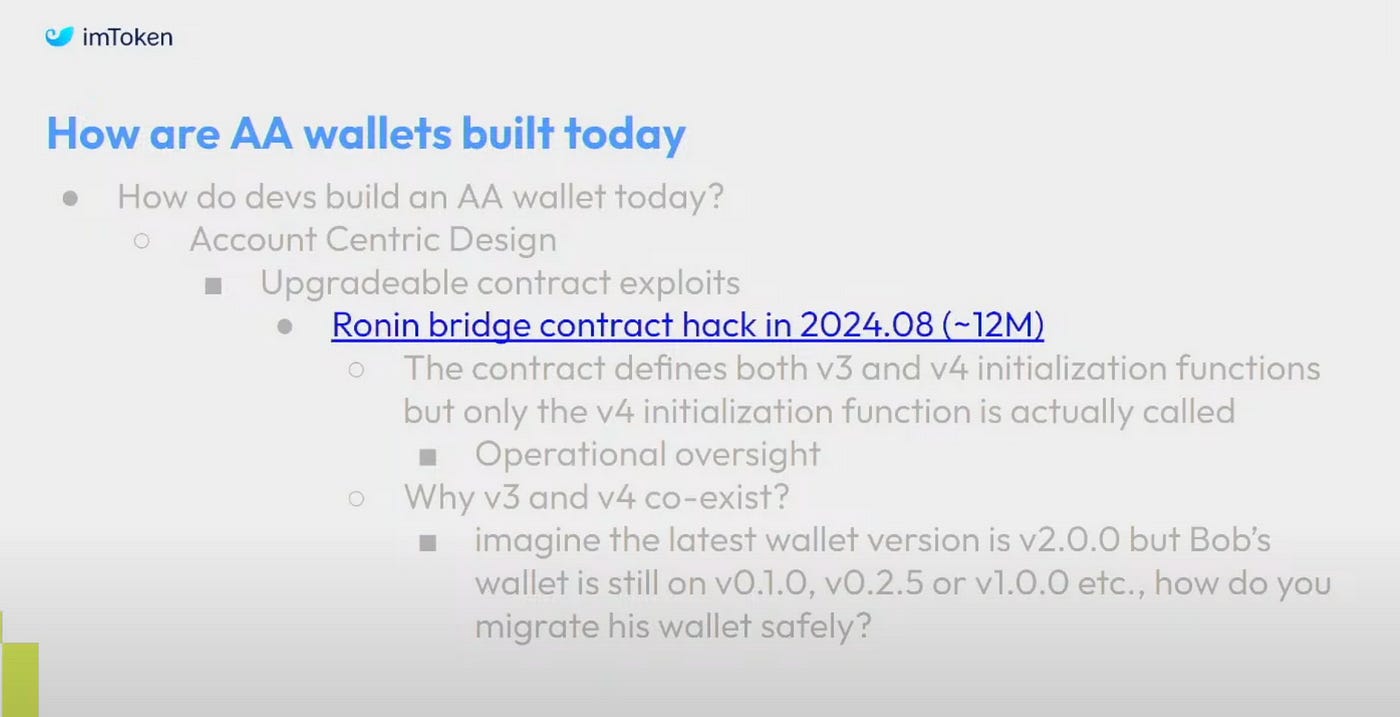

Problems with Traditional AA Wallets

Most current AA wallets follow an Account-Centric Design, where all functions such as validation logic, payment logic, and recovery mechanisms are tightly bound to the account contract.

This leads to several issues:

Wallet Switching: When users switch wallets, they must recreate the account and manually transfer assets, a process that is cumbersome and carries the risk of asset loss.

Contract Upgrades: The upgrade process is complex and prone to errors. For example, in 2024, the Ronin Bridge suffered a contract upgrade mishap that resulted in a loss of around $12 million.

The issue stemmed from having both v3 and v4 versions of initialization parameters — only v4 was correctly executed, while v3 was not, leading to vulnerabilities.

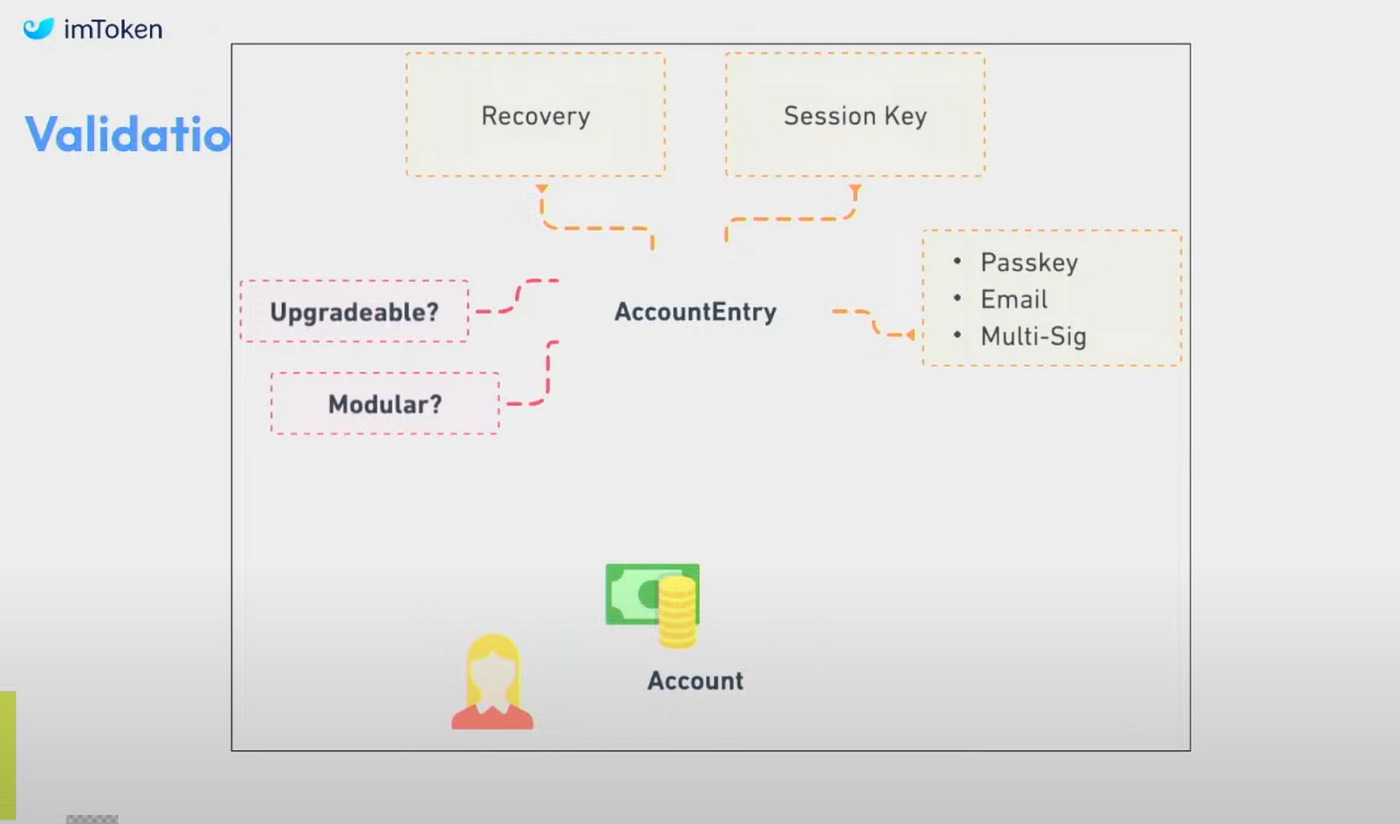

Validation-Centric Design

Validation-Centric Design separates complex functionalities (such as verification, payment, and upgrades) from the account contract, leaving only basic functionalities like asset storage and DApp interaction within the account itself. All other complex logic is consolidated into an AccountEntry contract, which acts as the entry point for the account contract and is responsible for handling more advanced operations.

What are the benefits of this approach?

Developers can deploy new contracts at any time without migrating user accounts.

It allows for rapid adaptation to new standards or frameworks.

Users remain unaware of backend changes, resulting in a smoother and more consistent user experience.
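The separation can be sketched in a few lines of Python. This is only a model of the idea, not imToken's contracts: the `set_entry` method, the string-based "signatures", and the validator functions are assumptions made for illustration.

```python
from typing import Callable

# (owner, signature) -> is the request valid? Purely illustrative.
Validator = Callable[[str, str], bool]


class AccountEntry:
    """Holds the validation logic; replaceable without moving any assets."""

    def __init__(self, name: str, validate: Validator) -> None:
        self.name = name
        self.validate = validate

    def handle(self, account: "Account", signature: str, to: str, amount: int) -> None:
        if not self.validate(account.owner, signature):
            raise PermissionError(f"{self.name}: validation failed")
        account.execute(self, to, amount)


class Account:
    """Minimal account: stores assets and only obeys its current entry."""

    def __init__(self, owner: str, balance: int, entry: AccountEntry) -> None:
        self.owner = owner
        self.balance = balance
        self.entry = entry

    def execute(self, caller: AccountEntry, to: str, amount: int) -> None:
        if caller is not self.entry:
            raise PermissionError("caller is not the account's entry")
        if amount > self.balance:
            raise ValueError("insufficient balance")
        self.balance -= amount

    def set_entry(self, caller: AccountEntry, new_entry: AccountEntry) -> None:
        # Hypothetical switch mechanism: only the current entry may hand over.
        if caller is not self.entry:
            raise PermissionError("caller is not the account's entry")
        self.entry = new_entry


entry_v1 = AccountEntry("v1", lambda owner, sig: sig == f"sig:{owner}")
account = Account("alice", 100, entry_v1)
entry_v1.handle(account, "sig:alice", "bob", 30)

# Switch to a new validation scheme; the account and its balance stay put.
entry_v2 = AccountEntry("v2", lambda owner, sig: sig == f"passkey:{owner}")
account.set_entry(entry_v1, entry_v2)
entry_v2.handle(account, "passkey:alice", "bob", 20)

print(account.balance)  # 50
```

The account object is never recreated: only the reference to its entry changes, which is the property that lets developers ship new validation logic without migrating users.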

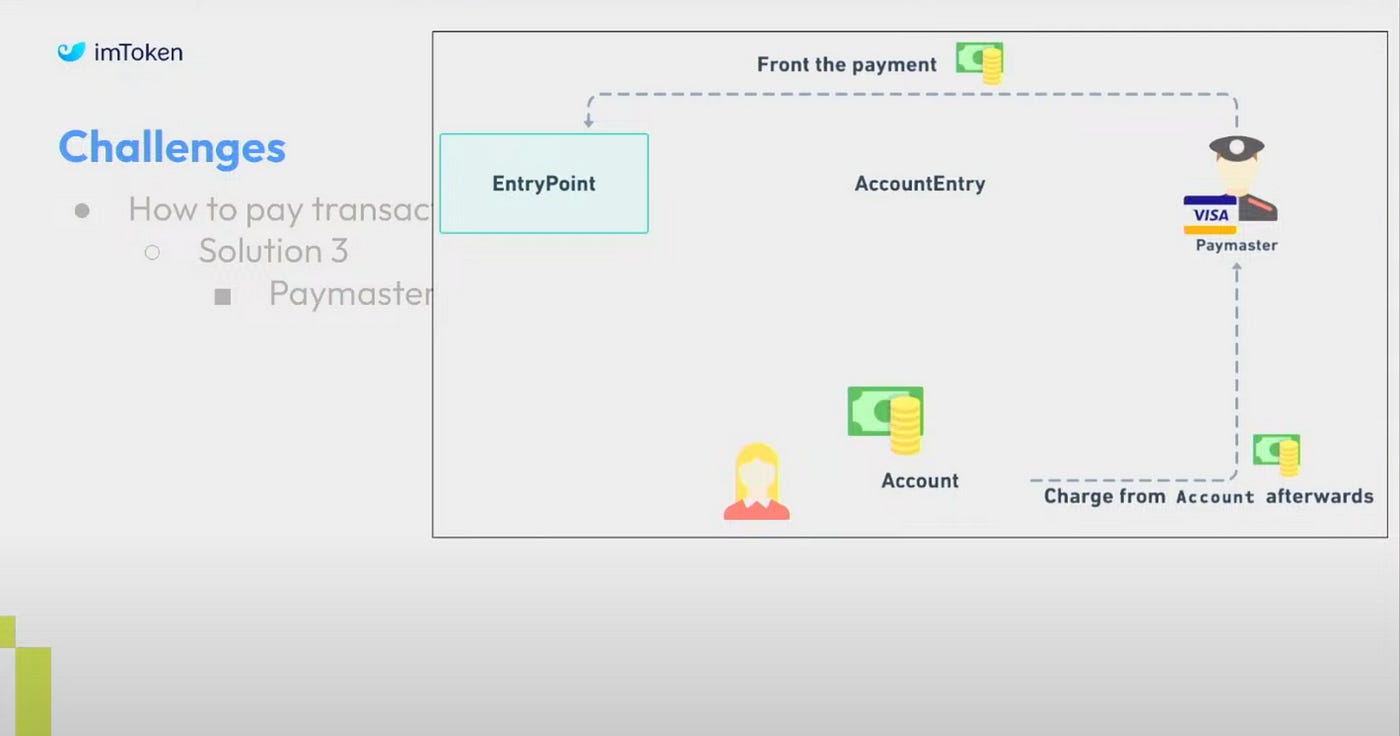

How is Gas Paid?

Since the AccountEntry contract initiates transactions but does not hold user funds, paying gas fees becomes one of the first challenges when using this architecture. Nic proposed three solutions:

Deposit user funds into the AccountEntry contract.

Transfer funds from the account contract to AccountEntry during the transaction.

Use a Paymaster to prepay the gas fee, then deduct the cost from the user’s account afterward — this is the method currently used by imToken.
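The third option can be illustrated with simple bookkeeping. The sketch below is a simplified model under my own assumptions (the `Balances` type, the function name, and the three-step ordering are hypothetical), not imToken's implementation or the exact ERC-4337 accounting.

```python
from dataclasses import dataclass


@dataclass
class Balances:
    paymaster: int
    user_account: int


def run_sponsored_tx(b: Balances, max_gas_cost: int, actual_gas_cost: int) -> Balances:
    """Paymaster fronts the gas, then the user's account pays it back."""
    if actual_gas_cost > max_gas_cost:
        raise ValueError("actual gas exceeds the prepaid maximum")
    if max_gas_cost > b.paymaster:
        raise ValueError("paymaster cannot cover the maximum gas cost")
    if actual_gas_cost > b.user_account:
        raise ValueError("user account cannot reimburse the paymaster")

    # 1. Before execution, the paymaster reserves the worst-case gas cost.
    paymaster = b.paymaster - max_gas_cost
    # 2. After execution, the unused part of the reservation is returned.
    paymaster += max_gas_cost - actual_gas_cost
    # 3. The user's account reimburses the paymaster for the gas actually used.
    user_account = b.user_account - actual_gas_cost
    paymaster += actual_gas_cost

    return Balances(paymaster=paymaster, user_account=user_account)


result = run_sponsored_tx(Balances(paymaster=1000, user_account=500),
                          max_gas_cost=50, actual_gas_cost=30)
print(result)  # Balances(paymaster=1000, user_account=470)
```

The paymaster ends where it started, and the user ends up paying only for the gas that was actually consumed, even though the AccountEntry itself never held any funds.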

5. Blockchain Security

5.1. Exploring AI’s Role in Smart Contract Security

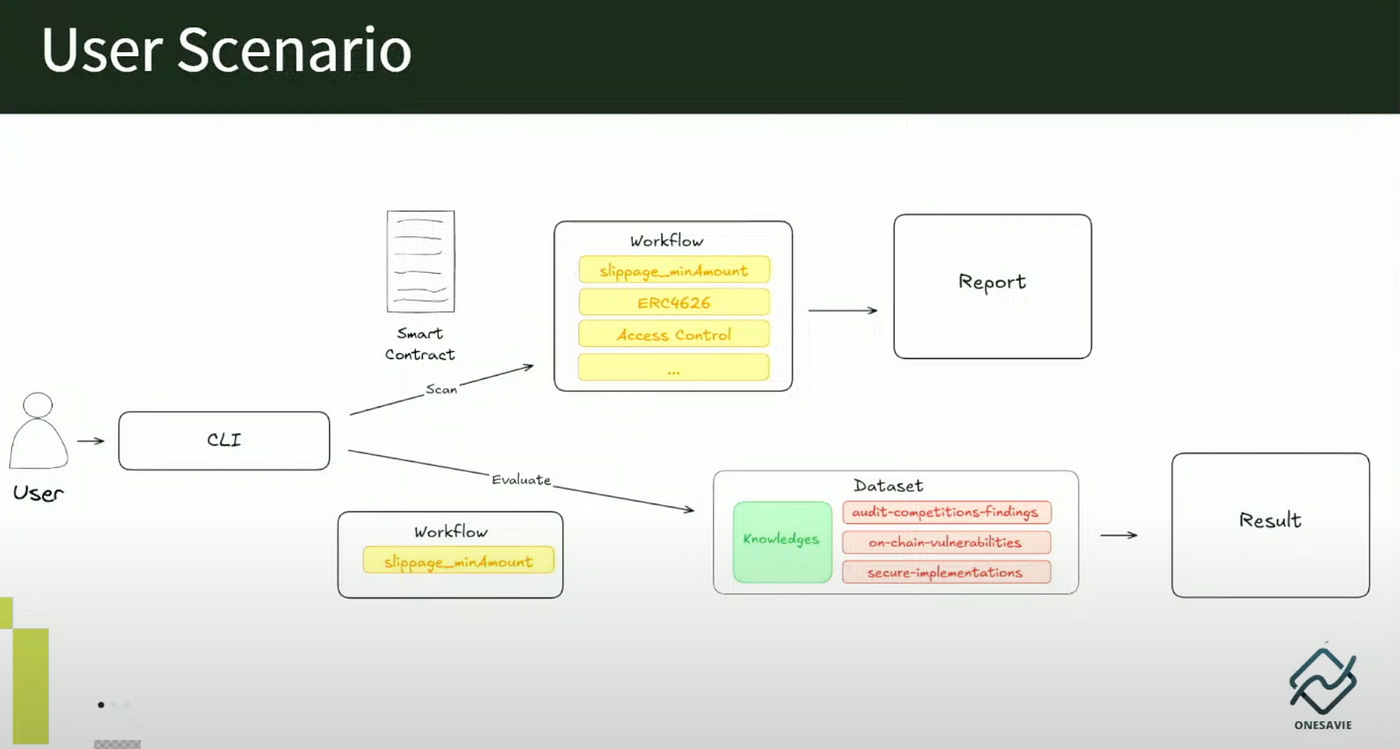

This session was presented by Alice and Daky from OneSavie Lab, sharing how to use AI tools to audit smart contracts. When auditing smart contracts, a significant amount of time is often spent understanding the codebase and identifying low-severity vulnerabilities. With the integration of AI, this process could potentially be improved. For security experts, AI can help them focus more on identifying high-severity, logic-related vulnerabilities rather than wasting time on low-risk issues. For general developers, such tools can be used to quickly scan contracts for potential issues, allowing them to detect and fix risks early.

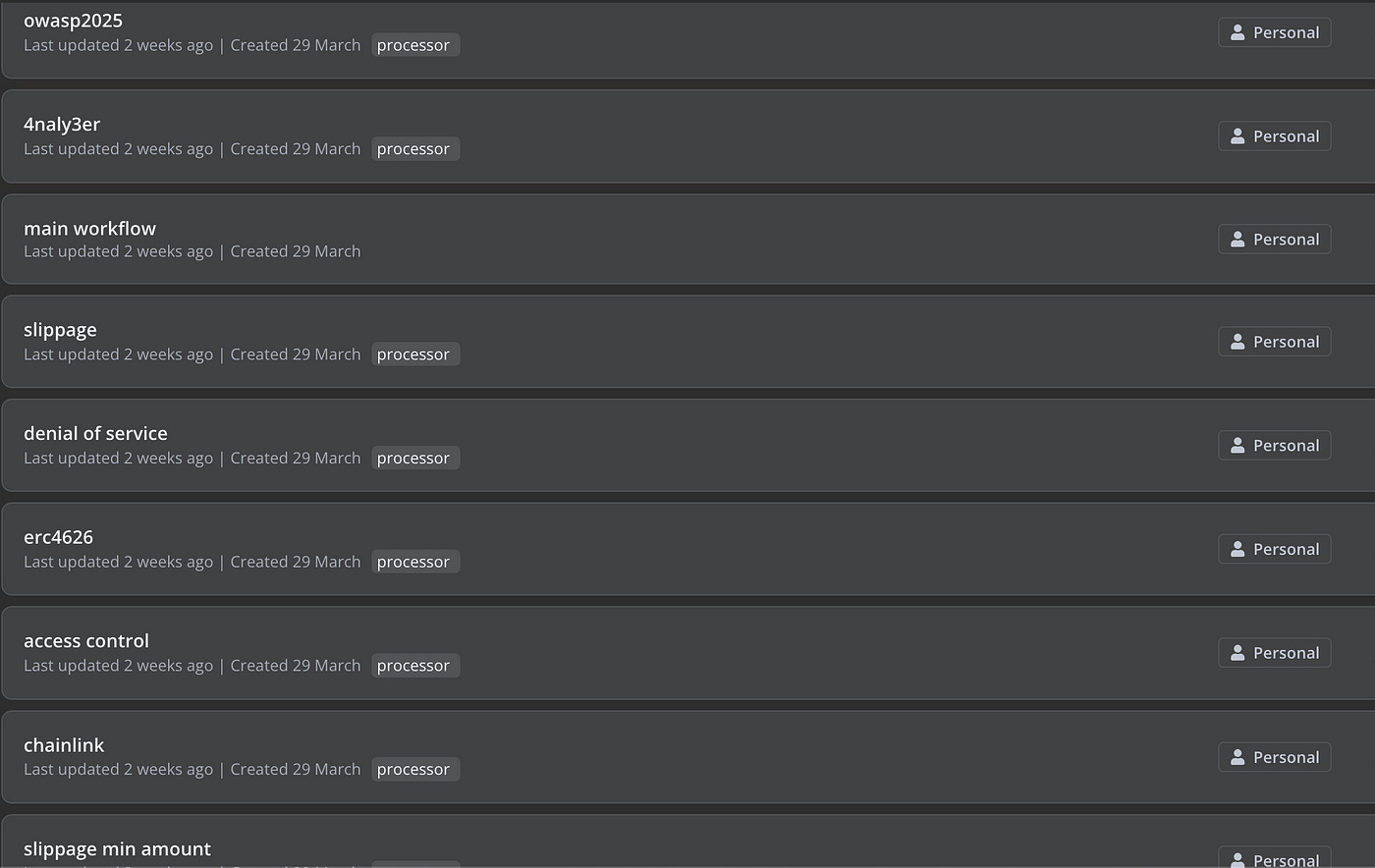

During the session, they introduced their open-source AI auditing tool, Bastet. Bastet covers common DeFi vulnerability types, drawing on real on-chain incidents and on medium- to high-risk findings from audit competitions, and it also provides secure implementation suggestions. The goal is to help developers and researchers better understand vulnerability patterns and follow security best practices.

Bastet offers two modes: The first mode is Scan, which can be used to scan smart contracts for vulnerabilities and generate reports. The second mode is Evaluate, which helps verify the accuracy of a workflow — specifically, whether it can correctly identify vulnerabilities.
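To give a feel for what evaluating a workflow's accuracy could involve, here is a small Python sketch that scores a scanner's findings against a labeled dataset. It does not use Bastet's API or its actual metrics: the `evaluate` function, the file names, and the choice of precision and recall are my own assumptions.

```python
def evaluate(findings: dict[str, bool], labels: dict[str, bool]) -> dict[str, float]:
    """Compare scanner output with ground-truth labels, contract by contract."""
    tp = fp = fn = 0
    for contract, is_vulnerable in labels.items():
        flagged = findings.get(contract, False)
        if is_vulnerable and flagged:
            tp += 1   # real vulnerability, correctly flagged
        elif not is_vulnerable and flagged:
            fp += 1   # false alarm
        elif is_vulnerable and not flagged:
            fn += 1   # missed vulnerability
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return {"precision": precision, "recall": recall}


# Hypothetical labeled dataset and scanner output.
labels = {"a.sol": True, "b.sol": True, "c.sol": False, "d.sol": False}
findings = {"a.sol": True, "b.sol": False, "c.sol": True, "d.sol": False}

scores = evaluate(findings, labels)
print(scores)  # {'precision': 0.5, 'recall': 0.5}
```

Low precision means auditors waste time on false alarms, while low recall means real issues slip through, so both numbers matter when comparing workflows.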

In this open-source tool, users can add their own datasets and workflows to build a customized AI-based scanning tool. Within Bastet’s n8n workflow, multiple rules are provided, allowing users to select based on their specific needs. This high level of flexibility ensures that users can precisely tailor automated workflows to suit their individual requirements.

AI auditing can certainly save a significant amount of manual effort, but I believe it cannot fully replace human auditors. Some auditors use tools like ChatGPT or Cursor to quickly identify vulnerabilities and generate PoCs, but there’s still a long way to go before AI can precisely detect flaws. After all, the root cause of vulnerabilities often lies in the complexity of logic, design, and system interactions — areas that require deep domain knowledge and contextual understanding, which AI still struggles to grasp fully.

Moreover, when it comes to risk assessment, prioritizing vulnerabilities, and providing remediation suggestions, human experience and judgment remain irreplaceable. The ideal path forward is human–AI collaboration: allowing AI to accelerate the review process and reduce repetitive tasks, while humans focus on strategic and critical vulnerability analysis to achieve maximum effectiveness. I’m looking forward to how this space evolves in the future.

5.2. Empowering Everyone: Taking Specialization Out Of Formal Methods

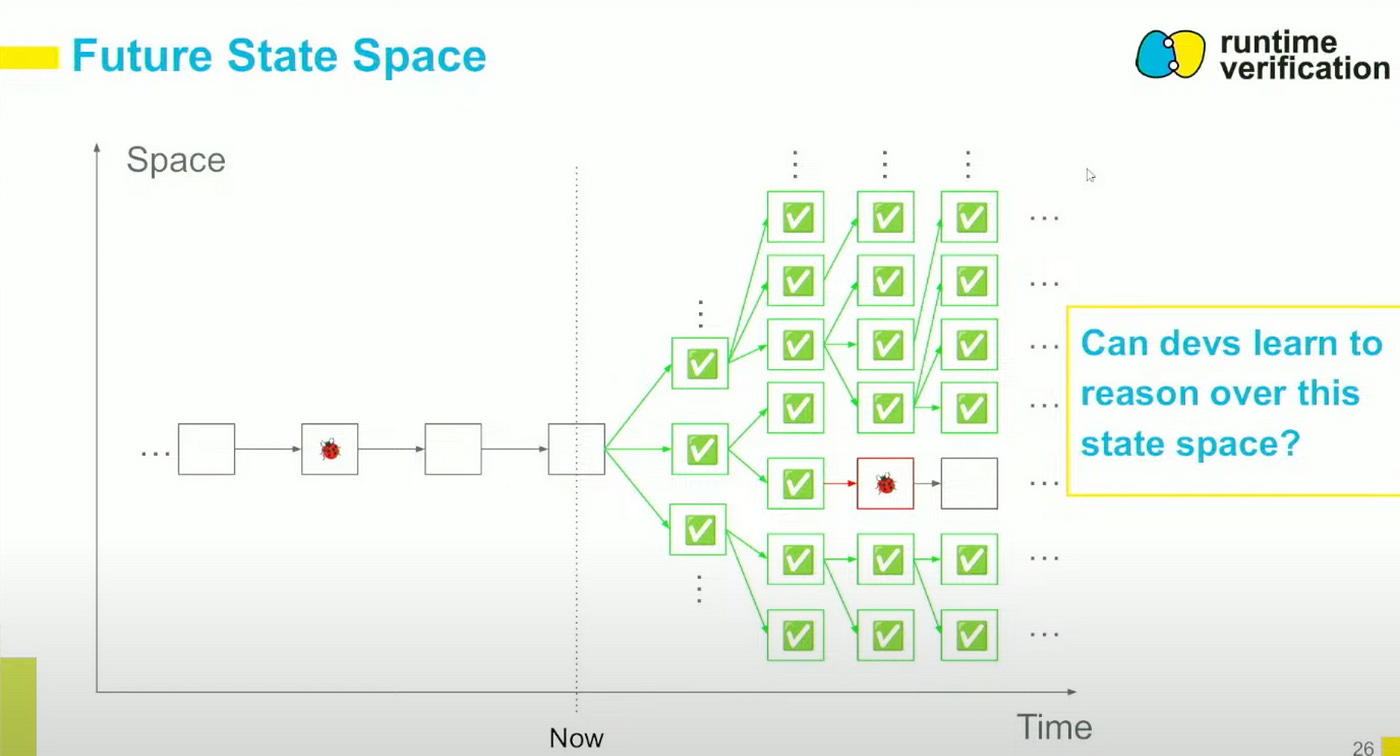

This session was presented by Daniel Cumming from Runtime Verification Inc., where he shared how to use formal methods to verify smart contracts. I’ve recently been exploring Certora’s formal verification tools, so I was also curious about how Runtime Verification differs from Certora.

Certora is more suitable for large-scale projects or teams with sufficient funding. In 2025, Certora open-sourced its verification tool, the Certora Prover, which is primarily designed for experienced auditors or companies writing strict logical verification rules.

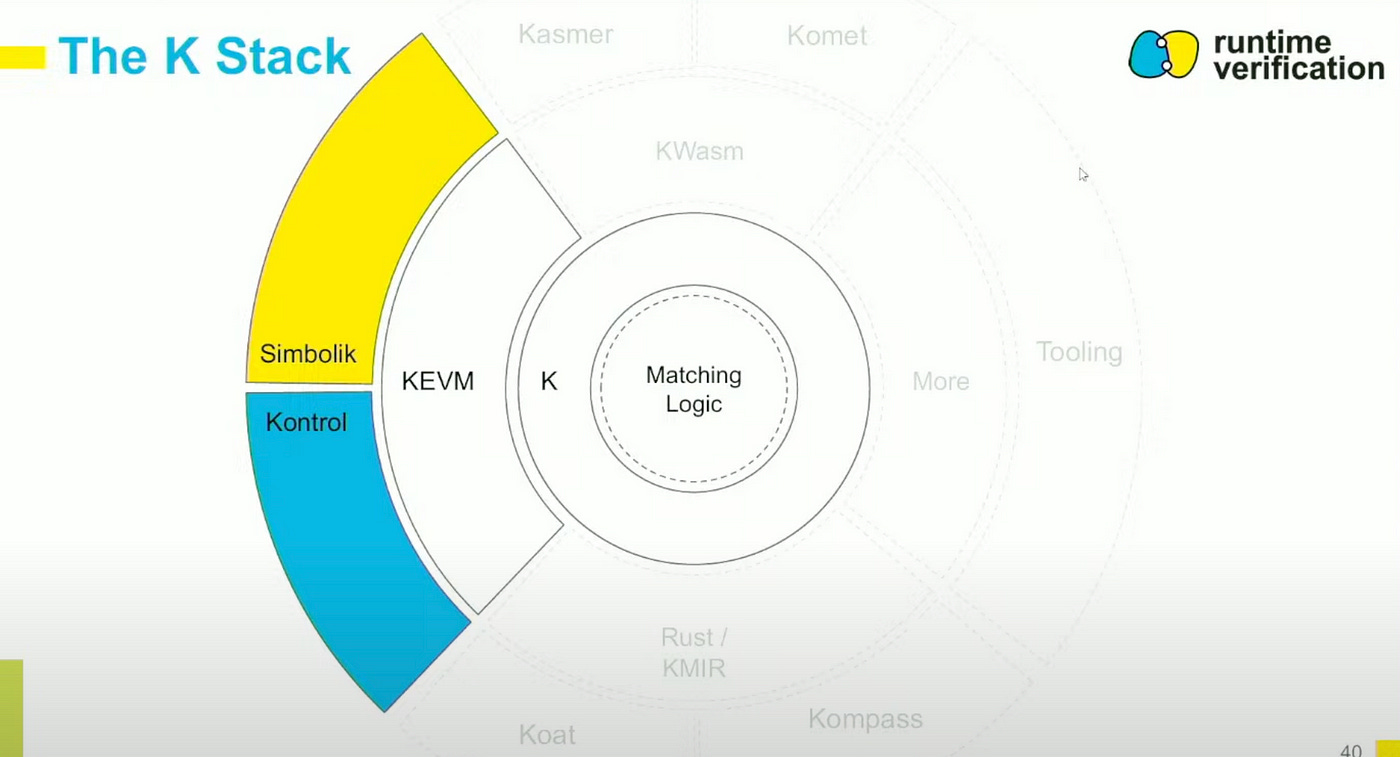

In contrast, Runtime Verification focuses on developer-friendly tools that bring verification directly into the development workflow. Using tools like Kontrol and Simbolik, developers can quickly verify logic and debug issues, and the tools can also run as part of a CI pipeline.

Runtime Verification offers two main tools:

Simbolik: A symbolic debugger designed specifically for Solidity developers, integrated with Visual Studio Code. It allows developers to monitor contract behavior during execution to ensure it adheres to expected specifications.

Kontrol: A formal verification tool built for EVM smart contracts, aimed at simplifying the complex verification process. It enables developers to use their existing Foundry tests as formal specifications, eliminating the need to learn new languages or tools.

The core of Runtime Verification’s K Stack architecture is the K Framework and its Matching Logic, which provide a formal semantic foundation. At the middle layer, KEVM, an EVM specification implemented in K, allows smart contract logic to be checked against a mathematically precise model of EVM execution.

The outer tools, Simbolik and Kontrol, correspond to the development and verification stages of smart contract workflows:

• Simbolik offers real-time symbolic debugging for developers.

• Kontrol converts test cases into formal specifications, enabling automated verification of a contract’s security and logical soundness.

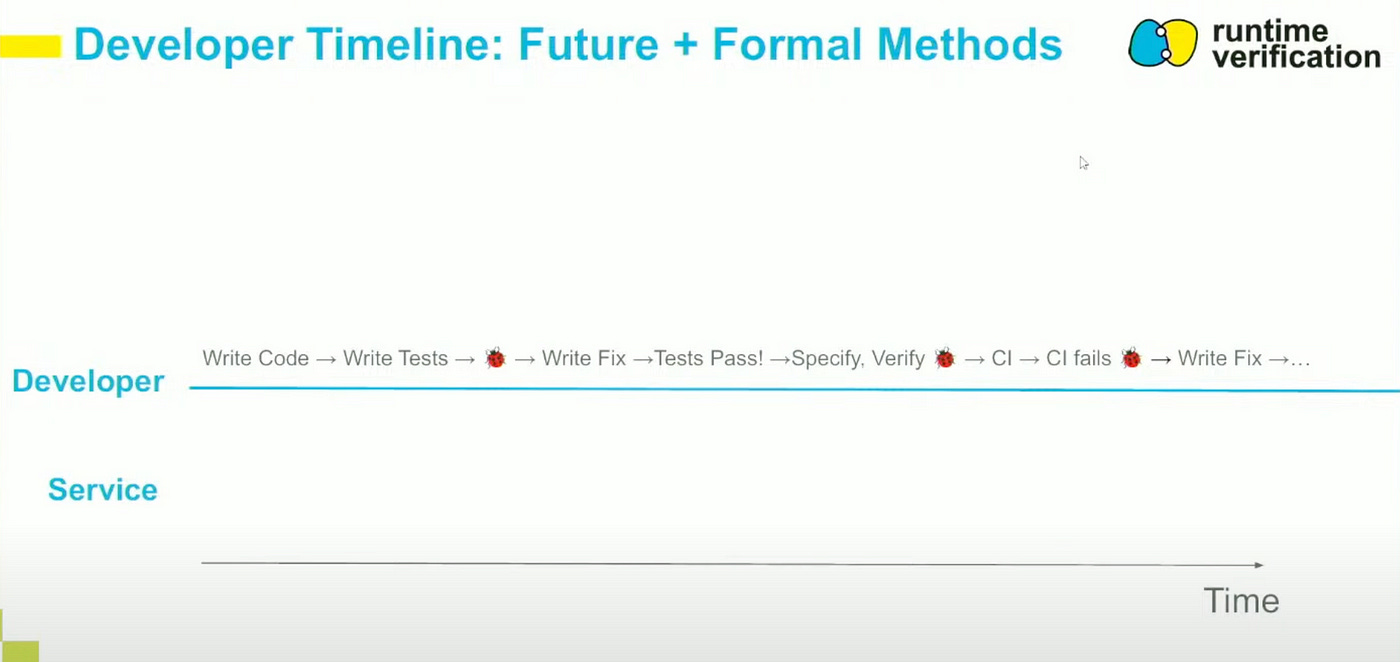

Because Runtime Verification’s tools can run in CI pipelines, developers can validate contract logic continuously during development and fix issues early. Traditionally, vulnerability discovery is handled by auditing firms after contract development is complete, which often involves back-and-forth and significant time for audits and fixes. Runtime Verification offers a proactive alternative: developers write and verify specifications themselves during development and catch vulnerabilities early in the process.

Reference:

https://runtimeverification.com/

Postscript:

The first ETHGlobal event of the year was held in Taipei, co-hosted with ETHTaipei. The first two days featured ETHTaipei sessions, followed by ETHGlobal’s Pragma program, and the final three days were dedicated to the ETHGlobal hackathon — making it a unique kind of blockchain week that was truly exciting.

Beyond the main sessions, ETHTaipei also hosted a Happy Hour featuring a traditional Taiwanese banquet, adding a distinctly local flavor to the event. There, I also had the chance to meet many community members from DeFiHackLabs.

Looking back on this week of blockchain events, it was incredibly rewarding. Learning and sharing have always been the foundation of the security community, and I look forward to seeing more open-source tools, more transparent audits, and more protocols that implement security by design.

The challenges ahead will only grow, and it’s up to us to build a verifiable, composable, and trustworthy Web3 security ecosystem — together.
